This case study describes the complete steps from root cause analysis to resolution of a native memory leak (PermGen space) problem experienced with a Weblogic 10.0 environment using Sun JDK 1.5.0.

This case study also shows how a simple JVM tuning mistake can have severe consequences, and how Java Heap Dump analysis can sometimes help pinpoint the root cause of a native memory leak involving class loaders.

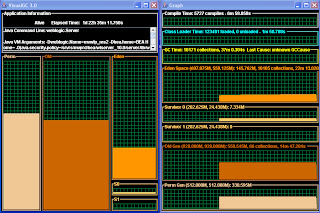

Environment specifications

· Java EE server: Weblogic 10.0

· OS: Solaris 10

· JDK: Sun JDK 1.5.0_14 (-XX:PermSize=512m -XX:MaxPermSize=512m -XX:+UseParallelGC -Xms1536m -Xmx1536m -Xnoclassgc -XX:+HeapDumpOnOutOfMemoryError)

· Platform type: Ordering Portal

Troubleshooting tools

· JVM Heap Dump (hprof format)

· VisualGC 3.0

· Eclipse Memory Analyser 0.6.0.2 (via IBM Support Assistant 4.1)

Problem overview

· Problem type: java.lang.OutOfMemoryError: PermGen space

This problem was escalated to us because the production environment kept failing with this OutOfMemoryError and had become unmanageable for the support team. A task force was assigned to dig into the root cause and provide a permanent solution.

As a mitigation, all the Weblogic servers were restarted every 2 days.

Gathering and validation of facts

As usual, a Java EE problem investigation requires gathering technical and non-technical facts so that we can derive other facts and/or conclude on the root cause. Before applying a corrective measure, the facts below were verified:

· Recent change of the affected platform? No

· Any recent traffic increase to the affected platform? Yes, the traffic of the environment has increased over the last few months

· How long has this problem been observed? It has been affecting the platform for about 1 year

· Is the permanent generation space growing suddenly or over time? It was observed using VisualGC tool that the PermGen space is growing on a regular / daily basis

· Did a restart of the Weblogic server resolve the problem? No, restarting the Weblogic server is only used as a mitigation strategy to prevent the OutOfMemoryError

· Conclusion #1: The problem is related to a memory leak of the Sun JVM permanent generation space (PermGen)

· Conclusion #2: The recent load increase appears to be the trigger for the increased need to restart the Weblogic servers

PermGen memory monitoring (via VisualGC)

PermGen space monitoring was performed via the Sun VisualGC 3.0 monitoring tool.

http://java.sun.com/performance/jvmstat/visualgc.html

The samples below show the growth of the permanent generation space over a period of a little more than 24 hours.

- December 24, 2010 @12:00 AM : PermGen @255MB

- December 24, 2010 @9:00 PM : PermGen @325MB

- December 25, 2010 @5:00 AM : PermGen @330MB

The VisualGC data showed conclusively that our application was steadily leaking PermGen space. The question now was why.
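As a rough sanity check, the three VisualGC samples above can be turned into a growth rate and a time-to-exhaustion estimate against the 512 MB limit set by -XX:MaxPermSize=512m. The sketch below assumes linear growth between the first and last sample, which is a simplification, and the class and method names are illustrative only.

```java
// Rough estimate only: assumes PermGen grows linearly between the first and
// last sample. Class and method names are hypothetical, not part of any tool.
final class PermGenGrowthEstimate {

    /** Average growth in MB per hour between two samples. */
    static double growthPerHour(double startMb, double endMb, double elapsedHours) {
        return (endMb - startMb) / elapsedHours;
    }

    /** Hours left before usage reaches the limit at the given growth rate. */
    static double hoursToExhaustion(double currentMb, double maxMb, double mbPerHour) {
        if (mbPerHour <= 0) {
            return Double.POSITIVE_INFINITY; // not growing, so no exhaustion expected
        }
        return (maxMb - currentMb) / mbPerHour;
    }

    public static void main(String[] args) {
        // First sample: 255 MB at midnight on December 24.
        // Last sample: 330 MB at 5:00 AM on December 25, i.e. 29 hours later.
        double rate = growthPerHour(255.0, 330.0, 29.0);
        // 512 MB comes from -XX:MaxPermSize=512m in the environment specifications.
        double hoursLeft = hoursToExhaustion(330.0, 512.0, rate);
        System.out.printf("growth: %.2f MB/h, headroom: about %.0f hours%n", rate, hoursLeft);
    }
}
```

With these samples the estimate works out to roughly 2.6 MB per hour and about 70 hours of headroom from the last sample, so only a few days of uptime were available before the limit would be reached.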

JVM Heap Dump

A few JVM Heap Dumps (java_pid<xyz>.hprof format) were generated by the Sun JVM following some occurrences of the OutOfMemoryError.

Please note that a Heap Dump is a snapshot of the Java Heap. The PermGen space is not normally part of a JVM heap dump, but the dump still provides some information about it, such as how many classes were loaded and how many class loaders exist, because these appear in the heap as pointers (stubs) to the real objects stored in the native memory space.

Heap Dump analysis

The analysis revealed:

· A large number of java.lang.Class instances loaded by the system class loader (leak suspect #2). These classes no longer appeared to be referenced.

JVM memory arguments review

Following the findings from the Heap Dump analysis, the JVM start-up arguments were reviewed for any configuration problem. We immediately found one suspect parameter: -Xnoclassgc.

By default the JVM unloads a class from the PermGen space once it is no longer in use (on the Sun JVM, this happens when the class loader that defined it becomes unreachable), but this can degrade performance in some scenarios. Turning off class garbage collection eliminates the overhead of loading and unloading the same class multiple times.

If a class is no longer needed, the space that it occupies is normally reclaimed and reused. However, for an application that handles each request by creating a new instance of a class, normal class garbage collection may free the PermGen space the class occupied, only for the class to be loaded again when the next request comes along. In this situation you might want to use this option to disable the garbage collection of classes.

However, in the Java EE world this is normally a bad idea: many applications and frameworks create classes dynamically or rely on reflection, and for this type of application the option can lead to a native memory leak and eventually to exhaustion.
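The effect of class unloading can be observed with the standard ClassLoadingMXBean. The sketch below is an illustration, not the application's code: it uses java.lang.reflect.Proxy with throwaway class loaders as a stand-in for a framework that generates classes dynamically, then prints the loaded and unloaded class counts. The class name and the loop size are arbitrary assumptions. With default settings the unloaded count would typically rise after a garbage collection, while with -Xnoclassgc it would be expected to stay flat. System.gc() is only a hint, so the numbers vary from run to run.

```java
import java.lang.management.ClassLoadingMXBean;
import java.lang.management.ManagementFactory;
import java.lang.reflect.InvocationHandler;
import java.lang.reflect.Proxy;
import java.util.HashSet;
import java.util.Set;

// Hypothetical demo class; Proxy stands in for any framework that generates classes.
final class ClassUnloadingDemo {

    /**
     * Generates one proxy class in each of `count` throwaway class loaders and
     * returns how many distinct classes were created. Nothing is retained, so
     * the loaders and their classes become unreachable when the method returns.
     */
    static int generateClasses(int count) {
        InvocationHandler noOp = (p, m, a) -> null;
        Set<Class<?>> distinct = new HashSet<>();
        for (int i = 0; i < count; i++) {
            // A fresh loader per iteration forces a fresh proxy class per iteration.
            ClassLoader throwaway =
                    new ClassLoader(ClassUnloadingDemo.class.getClassLoader()) { };
            Object instance = Proxy.newProxyInstance(
                    throwaway, new Class<?>[] { Runnable.class }, noOp);
            distinct.add(instance.getClass());
        }
        return distinct.size();
    }

    public static void main(String[] args) {
        ClassLoadingMXBean bean = ManagementFactory.getClassLoadingMXBean();
        long loadedBefore = bean.getTotalLoadedClassCount();
        int generated = generateClasses(1000);
        long loadedAfter = bean.getTotalLoadedClassCount();
        System.gc(); // a hint only; unloading is not guaranteed to happen here
        System.out.println("proxy classes generated:      " + generated);
        System.out.println("classes loaded during loop:   " + (loadedAfter - loadedBefore));
        System.out.println("classes unloaded so far:      " + bean.getUnloadedClassCount());
    }
}
```

Running the same program with and without -Xnoclassgc, and comparing the unloaded count, shows the difference between classes that can be reclaimed and classes that accumulate for the lifetime of the JVM.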

Root cause

The Heap Dump analysis and the JVM arguments review confirmed the following root cause:

· The -Xnoclassgc flag disabled class garbage collection in the Sun JVM PermGen space, which then leaked steadily because our application relies on frameworks that make frequent use of dynamic class loading and Java Reflection.

· It was determined that the parameter was introduced by human error during the load testing phase (performed about 1 year ago), in an attempt to tune the garbage collection process of the Java Heap.

Solution and tuning

Two areas were looked at during the resolution phase:

· Removal of the -Xnoclassgc flag from one of our production servers, along with monitoring.

· Ensure that re-enabling the Class garbage collector does not create any major overhead on the garbage collection and/or CPU utilization.

Removing this JVM argument provided instant relief with no negative side effects. The VisualGC snapshots confirmed that the PermGen space was no longer leaking and had stabilized at around 200 MB.
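Besides VisualGC, the same kind of trend can be sampled from inside the JVM through the standard java.lang.management API. A minimal sketch follows. Pool names differ by JVM version and collector (a permanent generation pool on older Sun JVMs, Metaspace on Java 8 and later), so the code lists every non-heap pool rather than assuming a name. The class name is illustrative.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryPoolMXBean;
import java.lang.management.MemoryType;
import java.lang.management.MemoryUsage;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper; it does not assume any particular pool name.
final class NonHeapPoolSampler {

    /** Returns the used bytes of each non-heap memory pool, keyed by pool name. */
    static Map<String, Long> sampleNonHeapUsedBytes() {
        Map<String, Long> used = new LinkedHashMap<>();
        for (MemoryPoolMXBean pool : ManagementFactory.getMemoryPoolMXBeans()) {
            if (pool.getType() != MemoryType.NON_HEAP) {
                continue; // skip Java heap pools (eden, survivor, old generation)
            }
            MemoryUsage usage = pool.getUsage();
            if (usage != null) { // getUsage() returns null for an invalid pool
                used.put(pool.getName(), usage.getUsed());
            }
        }
        return used;
    }

    public static void main(String[] args) {
        sampleNonHeapUsedBytes().forEach((name, bytes) ->
                System.out.printf("%-35s %8.1f MB%n", name, bytes / (1024.0 * 1024.0)));
    }
}
```

Logging these values at a fixed interval, before and after a configuration change, gives a trend comparable to the VisualGC snapshots.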

Recommendations and best practices

· When facing OutOfMemoryError problems, always start with the basics, e.g. review your JVM start-up arguments for any obvious tuning problem before moving on to deep dive analysis.

· Take advantage of the Eclipse Memory Analyser heap dump analysis tool, as it may help you pinpoint certain types of native memory leak.
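The first recommendation can be partly automated. The sketch below reads the start-up arguments of the running JVM through RuntimeMXBean.getInputArguments() and reports any that appear on a watch list. The watch list contains only -Xnoclassgc, the flag from this case study; which flags deserve review in another environment is a judgment call, and the class and method names are hypothetical.

```java
import java.lang.management.ManagementFactory;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper for a quick review of JVM start-up arguments.
final class JvmArgumentReview {

    // Flags to report. Only the flag from this case study is listed here.
    private static final List<String> WATCH_LIST = Arrays.asList("-Xnoclassgc");

    /** Returns the arguments that exactly match an entry on the watch list. */
    static List<String> findSuspectFlags(List<String> jvmArgs) {
        List<String> suspects = new ArrayList<>();
        for (String arg : jvmArgs) {
            if (WATCH_LIST.contains(arg)) {
                suspects.add(arg);
            }
        }
        return suspects;
    }

    public static void main(String[] args) {
        // Arguments passed to the JVM itself, not to the application's main method.
        List<String> inputArgs = ManagementFactory.getRuntimeMXBean().getInputArguments();
        List<String> suspects = findSuspectFlags(inputArgs);
        System.out.println(suspects.isEmpty()
                ? "No watched flags found"
                : "Review these flags: " + suspects);
    }
}
```

Passing the argument list from the environment specifications above to findSuspectFlags would flag -Xnoclassgc and nothing else.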

4 comments:

This is simply awesome man, no other word to describe because of step by step explanation of java.lang.OutOfMemoryError: PermGen, but I would say you rather turn out to be lucky to get escaped easily by this :)

Thanks for your comments.

hehe you are correct, from a root cause and resolution perspective we were quite lucky since the solution was simple (removal of -Xnoclassgc argument) vs. more complex PermGen space leak problems.

Thanks.

P-H

Hi PIERRE-HUGUES,

Can -XX:MaxGCPauseMillis=50 be reason for java.lang.OutOfMemoryError: Java heap space

Other related parameters: -XX:NewRatio=2 -Xms1024m -Xmx4096m -XX:MaxPermSize=256m

Hi Vladimir,

This parameter can only cause the Full GC to kickoff more often but should not lead to OOM. Based on your settings, you have a max heap size of 4 GB. NewRatio=2 means that 1/3 of your Heap Size will be allocated for the YoungGen space, quite typical.

Regarding your problem I recommend the following:

- Enable verbose:GC so you can first identify the Java heap depletion pattern e.g. sudden depletion vs. capacity problem vs. memory leak.

You can read this article on how to analyze the verbose:gc output.

http://javaeesupportpatterns.blogspot.ca/2011/10/verbosegc-output-tutorial-java-7.html

http://www.youtube.com/watch?v=7CJCMKNoICE

Regards,

P-H
